Practical AI & Computing On Your Terms

Private AI assistants, local inference infrastructure, and automation built on your own environment with clear data control.

Why Not Cloud AI?

Cloud AI is quick to start with, but for organizations that handle sensitive data or expect usage to grow, its trade-offs in privacy, cost, and control tend to increase over time.

| Factor | Cloud AI | Private AI (On-Premises) |
|---|---|---|
| Data Privacy | Data sent to external servers | All data stays on-premises |
| Operational Cost | Per-token fees scale with usage | Upfront hardware investment, then power and maintenance |
| Latency | Internet-dependent, variable | Local inference with no internet round trip |
| Compliance (UU PDP) | Requires data processing agreements | Supports stronger control boundaries |
| Availability | Dependent on provider uptime | Works offline with local resiliency planning |
| Customization | Limited to provider-supported models and tuning options | Fine-tune open models on your own data |
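
To make the operational cost row concrete, here is a minimal break-even sketch. Every figure in it is a hypothetical placeholder rather than a quote or a benchmark, and the function name `break_even_months` is invented for illustration. It assumes a flat per-token cloud price and a fixed monthly running cost for the on-premises system.

```python
import math


def break_even_months(hardware_cost, monthly_running_cost,
                      tokens_per_month, cloud_price_per_million_tokens):
    """Return the first whole month in which cumulative cloud fees reach or
    exceed cumulative on-premises spend, or None if that never happens."""
    cloud_monthly = tokens_per_month / 1_000_000 * cloud_price_per_million_tokens
    monthly_saving = cloud_monthly - monthly_running_cost
    if monthly_saving <= 0:
        # Cloud is cheaper every month, so the hardware never pays for itself.
        return None
    return math.ceil(hardware_cost / monthly_saving)


# Hypothetical inputs: 12,000 hardware, 200 per month for power and upkeep,
# 500 million tokens per month at 2.0 per million tokens.
months = break_even_months(12_000, 200, 500_000_000, 2.0)  # 15
```

At low volumes the function returns `None`, which is the case where per-token pricing remains the cheaper option.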

Compliance Readiness

Our architecture approach can be aligned to common regulatory and governance frameworks for privacy, healthcare data, and security management.

UU PDP (Indonesia)

Supports data residency and governance controls for personal data handling within Indonesian regulatory expectations.

GDPR (EU)

Helps organizations address lawful processing and data minimization requirements, and gives them stronger control over cross-border data exposure.

HIPAA (US healthcare)

Useful for healthcare workflows requiring stricter protection of patient data and auditable access controls.

ISO 27001

Aligns infrastructure operations with information security management practices and repeatable control processes.

ISO 27701

Extends privacy management controls for personal data governance on top of core security practices.

ISO 42001

Provides an AI management system framework for risk, accountability, and governance in operational AI deployments.

Ideal Use Environments

Designed for organizations that need stronger control over data, predictable operations, and practical long-term cost planning.

Healthcare & Clinics

Private AI support for intake summaries, clinical documentation assistance, and controlled data handling in regulated environments.

Logistics & Warehouses

Operational AI for detection, alerts, and process visibility across warehouse, production, and facility workflows.

Education & Research

Internal assistants for knowledge search and student support while keeping academic data inside institutional infrastructure.

Solution Architectures

Local AI Assistant

OpenWebUI with your choice of open-source language model deployed on your own hardware for internal team use.

- Policy & Document Q&A

- Content Summarization

- Internal Knowledge Base

- Image ideation workflows

Computer Vision Monitoring

AI-powered visual detection running on local infrastructure for monitoring, event detection, and operational visibility without external video processing.

- Intrusion Detection

- Quality Control

- Crowd Analytics

- PPE Compliance Monitoring

Intelligent Automation

Automation workflows across internal systems, operations, and surveillance to improve response speed and reduce repetitive manual work.

- Predictive Maintenance

- Dynamic Lighting & HVAC

- Automated Reporting

- Multi-site Sync

See It In Action

A private AI assistant running entirely on local infrastructure, accessible from any browser on your network.


How It Works

A typical private AI deployment where your data stays within your own environment.

User Terminals

Web browser / desktop

Local LLM Server

Inference Engine + OpenWebUI

Knowledge Base

Your documents & data

All data stays within your network, and the deployment can be designed to support UU PDP, GDPR, HIPAA, and ISO-aligned controls.
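
The flow from user terminal to local LLM server to knowledge base can be sketched as follows. This is an illustration under stated assumptions, not a description of the actual deployment: it assumes the inference engine exposes an OpenAI-style `/v1/chat/completions` route (the page does not name one), and the address, model name, and helper names are hypothetical. The guard accepts only private or loopback IP literals, so a request is never built for a public address.

```python
import ipaddress
import json
import urllib.request
from urllib.parse import urlparse


def is_private_endpoint(url):
    """True only when the URL host is an IP literal in a private or loopback range."""
    host = urlparse(url).hostname
    if host is None:
        return False
    try:
        addr = ipaddress.ip_address(host)
    except ValueError:
        # Hostnames would need DNS resolution; they are treated as unverified here.
        return False
    return addr.is_private or addr.is_loopback


def build_chat_request(base_url, model, question, context_snippets):
    """Build (but do not send) a chat request for a local inference server."""
    if not is_private_endpoint(base_url):
        raise ValueError("refusing to send data to a non-private endpoint")
    payload = {
        "model": model,
        "messages": [
            # Knowledge base snippets are passed as context for the answer.
            {"role": "system",
             "content": "Answer using only the provided context.\n\n"
                        + "\n\n".join(context_snippets)},
            {"role": "user", "content": question},
        ],
    }
    return urllib.request.Request(
        base_url.rstrip("/") + "/v1/chat/completions",  # assumed route
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# Hypothetical LAN address and model name.
request = build_chat_request(
    "http://192.168.10.20:8080", "local-model",
    "What is the leave policy?", ["Annual leave policy for staff."],
)
```

Passing the result to `urllib.request.urlopen(request)` would send it. The sketch stops before that step so it runs without a server.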

Full-Stack AI Deployment

A complete on-premises AI deployment spans multiple layers, from physical hardware up to the user-facing interface. Every component stays within your own environment.

Interface Layer

AI Chat Interface

Web-based, team-ready assistant

Monitoring Dashboard

System metrics, alerts, time-series

Operations Console

Camera feeds, sensor views, logs

Application Services

LLM Inference

Local language model serving

Computer Vision

Detection & analytics pipeline

RAG / Knowledge

Vector search + embeddings

Automation Engine

Workflow & integration layer

Container Runtime


Isolated, reproducible service deployment across all AI workloads

System Software

GPU / AI Accelerator Drivers

NVIDIA CUDA · AMD ROCm · Intel oneAPI

Operating System

Linux — Ubuntu LTS / RHEL compatible

Hardware

AI Inference Server

GPU: NVIDIA / AMD / Intel Arc

CV Processing Node

GPU or NPU accelerated

Storage Server

NVMe / NAS / Object Storage

Internal Network

10 / 25 / 100 GbE LAN fabric

Data Sources & Inputs

IP Cameras

RTSP / ONVIF streams

IoT Sensors

MQTT / Zigbee / Modbus

Documents & Files

PDF, Office, internal data

User Terminals

Browser / desktop / mobile

All components run on your own hardware within your network. No data leaves your facility.
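
The RAG / Knowledge service listed under Application Services pairs embeddings with vector search. The toy sketch below shows the retrieval step only. Its `embed` function is a word-count stand-in, assumed purely for illustration, where a real deployment would call a locally hosted embedding model and a vector store. All names and sample documents are hypothetical.

```python
import math
from collections import Counter


def embed(text):
    """Toy embedding: lowercase word counts (stand-in for a real embedding model)."""
    return Counter(text.lower().split())


def cosine(a, b):
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[w] * b[w] for w in a if w in b)
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


def top_k(query, documents, k=2):
    """Return up to k documents most similar to the query, skipping zero matches."""
    q = embed(query)
    scored = sorted(((cosine(q, embed(d)), d) for d in documents), reverse=True)
    return [doc for score, doc in scored[:k] if score > 0]


documents = [
    "Annual leave policy for staff",
    "Warehouse forklift safety checklist",
    "Server maintenance window schedule",
]
matches = top_k("leave policy", documents, k=1)
```

The retrieved documents would then be passed to the local language model as context, so both the search and the generation stay on local hardware.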

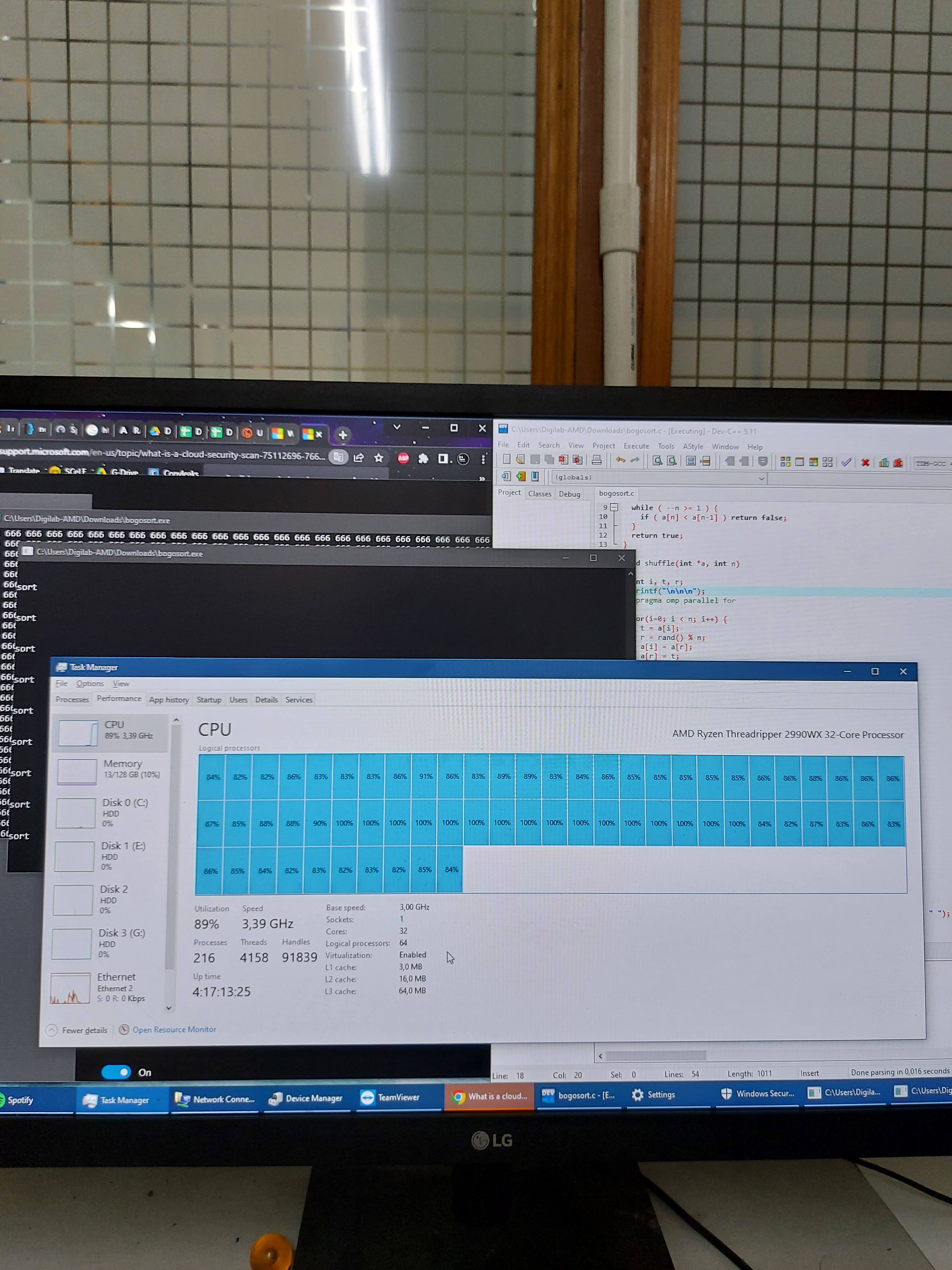

Built on Elite Hardware

We design and build GPU workstations and inference servers for private AI workloads with practical sizing, clear upgrade paths, and long-term maintainability.

Our Hardware Builds

AI Inference Server

High-Performance Workstation

Ready to Build Your AI Infrastructure?

We help you assess requirements, architecture options, and deployment priorities for a private AI system that fits your organization.